Executive Summary

Artificial Intelligence (AI) is introducing a new class of enterprise risk. These systems do not produce verified answers or exercise judgment. They generate probabilistic outputs that appear credible but are not inherently reliable. When these systems are embedded in enterprise workflows, the result is AI risk in decision-making: decisions are increasingly influenced by outputs that have not been validated for accuracy or context.

Risk shifts from system failure to decision integrity. Organizations continue to operate, but decision quality can degrade over time as plausible but incorrect outputs enter analysis, recommendations, and operations. The challenge is managing AI decision risk at scale while maintaining reliability and control.

Governing AI risk requires changing how outputs are used in decision processes. AI-generated content must be treated as input, not decision. Generation must be separated from validation. Decision ownership must remain explicit. Outcomes must be continuously monitored to detect errors and drift.

The objective is not to eliminate AI risk or make AI perfectly reliable. It is to control how AI influences decisions, ensuring that even when outputs are uncertain, the decisions built on them remain reliable, accountable, and defensible.

AI Risk Starts in Decision-Making

AI is already embedded in enterprise workflows—supporting analysis, generating recommendations, and influencing decisions. The issue is not whether these systems are useful. It is how they are used.

AI-generated outputs are entering decision processes, often without consistent validation. They are treated as reliable, even though they are not designed to guarantee correctness. This introduces a different type of AI risk in enterprise decision making, one that comes not from system failure but from how decisions are made.

AI systems influence what information is produced and how it is presented. They do not operate on truth, reasoning, or judgment. They operate on probability, generating outputs that are plausible rather than verified.

AI is not intelligence—it is a probabilistic system that generates plausible outputs without understanding what is true.

Plausible outputs, when treated as reliable inputs, shift how decisions are made. Decisions begin to rely on information that has not been validated. Over time, this creates AI decision risk, where decisions appear well-supported but are built on uncertain foundations.

Decision quality has traditionally depended on determinism: consistent logic, repeatable outputs, and verifiable correctness. With AI, that foundation shifts. Outputs vary. Errors are not always visible. Plausibility becomes the signal that drives trust.

Organizations now face a structural change in how they must manage AI risk and ensure AI reliability in decision making. The question is no longer whether AI can produce useful outputs. It clearly can. The real question is: How do you govern AI risk and protect decision integrity when the systems influencing decisions cannot be trusted at face value?

This article explains how AI creates risk in enterprise environments, why traditional governance does not manage it effectively, and how organizations can manage AI risk, validate AI outputs for decisions, and use AI safely in enterprise decision making at scale.

What AI Actually Is (and What It Isn’t)

Artificial Intelligence (AI) systems used in the enterprise do not evaluate truth, apply judgment, or understand meaning.

AI is a probabilistic output generator. It produces likely responses, not verified results.

These systems generate outputs by predicting what is most likely given an input, based on patterns learned from data. AI outputs are well-formed, coherent, and confident. They are easy to read, easy to use, and easy to trust. Yet the system producing them has no mechanism to determine whether the output is correct. As a result, information enters decision processes differently: AI systems do not produce verified answers. They produce candidate outputs.

AI changes the problem from producing answers to determining which outputs can be trusted.

This is where AI decision risk begins. Outputs are designed to be plausible. Plausibility can look indistinguishable from correctness, especially at scale. An output can appear accurate, be internally consistent, and align with expectations, and still be wrong. Decisions begin to rely on information that has not been verified. Over time, this undermines AI reliability in enterprise decision making.

To manage AI risk and ensure AI reliability, outputs must be treated as unverified inputs until they are explicitly validated. This is not a limitation to work around. It is a defining property of how these systems function.
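One way to picture the rule that outputs stay unverified until explicitly validated is a small wrapper type. The sketch below is illustrative only: the names `AIOutput`, `mark_validated`, and `use_in_decision` are hypothetical and do not refer to any existing product or library.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AIOutput:
    """A candidate output from an AI system (hypothetical type)."""
    content: str
    validated_by: Optional[str] = None  # None means nobody has checked it yet

    @property
    def is_validated(self) -> bool:
        return self.validated_by is not None


def mark_validated(output: AIOutput, reviewer: str) -> AIOutput:
    """Return a copy of the output that records who validated it."""
    if not reviewer:
        raise ValueError("a named reviewer is required")
    return AIOutput(content=output.content, validated_by=reviewer)


def use_in_decision(output: AIOutput) -> str:
    """Release content to a decision process only if it has been validated."""
    if not output.is_validated:
        raise PermissionError("unvalidated AI output cannot be used in a decision")
    return output.content
```

The design choice here is that the default state is "unverified": an output can only reach a decision after a named reviewer has been recorded against it.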

How AI Creates Risk in Enterprise Decision-Making

AI does not introduce risk through isolated failures. It changes how risk enters enterprise decision making. The impact develops through how outputs are perceived, used, and propagated across workflows.

Three patterns explain how AI risk in enterprise decision making develops and scales: outputs appear more reliable than they are, they are produced faster than they can be verified, and once used, they spread across decisions.

AI outputs are structured, fluent, and confident. They create the impression of competence, and that impression is often mistaken for reliability.

AI does not fail consistently—it fails unpredictably, which makes its errors harder to detect.

Trust forms quickly and carries forward even when output quality changes. Past success does not predict future accuracy.

AI systems generate large volumes of content: analysis, summaries, recommendations, and code. Output increases rapidly. The ability to verify it does not.

AI reduces the cost of generating outputs, but not the cost of verifying them.

A structural imbalance emerges. As output volume increases, validation becomes constrained. Unverified outputs enter decision workflows because time is limited, outputs appear credible, and validation is assumed rather than performed. You can produce more, but you cannot check more. AI reliability risk increases at this point. Errors are often embedded within otherwise correct outputs, making them difficult to detect without careful review.

Once unverified outputs enter workflows, they propagate. AI-generated content is reused in reports, incorporated into analysis, and used to support recommendations. One unverified output can influence multiple decisions, and errors accumulate.
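The imbalance between generation and verification can be made concrete with simple arithmetic. The sketch below assumes constant daily rates, which real workloads will not have, and the figures in the usage lines are invented purely for illustration.

```python
def unverified_backlog(generated_per_day: int, reviewed_per_day: int, days: int) -> int:
    """Outputs left unreviewed after `days`, assuming constant daily rates."""
    if min(generated_per_day, reviewed_per_day, days) < 0:
        raise ValueError("rates and days must be non-negative")
    # Review capacity beyond what is generated cannot create a negative backlog.
    daily_gap = max(generated_per_day - reviewed_per_day, 0)
    return daily_gap * days


# Illustrative numbers only: 200 outputs generated and 50 reviewed per day.
backlog = unverified_backlog(generated_per_day=200, reviewed_per_day=50, days=20)
print(backlog)  # 3000
```

Under these assumed rates, three quarters of each day's outputs go unchecked, and the unreviewed pool grows linearly for as long as the gap persists.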

AI does not break systems—it quietly degrades the quality of decisions.

There is no outage. No alert. No visible failure. Organizations continue to operate, but decisions are increasingly based on uncertain inputs. AI risk is distributed across decision processes. Managing AI risk in enterprise decision making requires controlling how outputs are validated, where they enter workflows, and how they influence decisions at scale.

Why Traditional Governance Doesn’t Manage AI Risk Effectively

Enterprise governance models were designed for systems that behave predictably. They assume outputs are consistent, traceable, and verifiable. AI systems do not meet those assumptions. Outputs vary. Errors are not always visible. Tracing how a result was produced is often difficult, and correctness cannot be assumed without validation.

AI is not failing governance—governance is failing to account for how AI systems actually behave.

A structural gap emerges. Controls designed for deterministic systems do not prevent AI risk in enterprise decision making, because they operate at the wrong level. Traditional governance focuses on system performance, access control, data quality, and compliance. These remain necessary, but they do not control how AI outputs influence decisions. Risk emerges when unverified outputs shape analysis, recommendations, and actions.

Governance frameworks operate at checkpoints, before deployment or through periodic review. AI operates continuously. Static controls do not match dynamic behavior. Decision processes become the point of exposure. Managing AI decision risk requires shifting governance from systems to decisions.

How to Govern AI Risk and Protect Decision Integrity

Managing AI risk in enterprise decision making depends on how outputs are controlled within decision processes. AI-generated outputs should be treated as inputs—unverified until proven otherwise.

AI cannot be made perfectly reliable, but its impact can be systematically controlled.

Outputs must be separated from decisions. Generation and validation cannot be the same step. Outputs require independent review before use. Ownership must remain explicit. Every decision must have a defined owner accountable for the outcome. Validation must be continuous. AI behavior changes over time, and verification must occur during use—not just before deployment.

Use must be constrained. AI should be applied where outputs can be validated with reasonable confidence. These controls do not eliminate AI risk. They prevent it from degrading decision integrity.

A Governance Model to Control AI Risk in Decision-Making

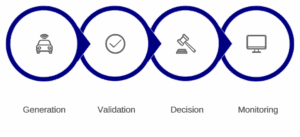

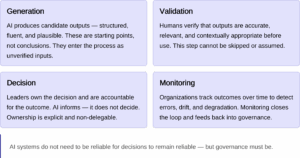

Managing AI risk in enterprise decision making requires a structured model that governs how outputs are generated, validated, and used. The model centers on four functions: generation, validation, decision, and monitoring.

Governance Flow

Operating Model

AI generates → Humans validate → Leaders decide → Organizations monitor

- Generation produces candidate outputs.

- Validation ensures outputs are accurate and usable.

- Decision maintains ownership and accountability.

- Monitoring tracks outcomes and detects emerging risk.
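The four functions above can be sketched as a single governed step. This is a minimal illustration under stated assumptions, not a reference implementation: `DecisionRecord` and `run_governed_decision` are hypothetical names, and the `generate`, `validate`, and `decide` callables stand in for an AI system, a human reviewer, and an accountable decision owner.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class DecisionRecord:
    """Audit entry for one AI-influenced decision (hypothetical structure)."""
    candidate: str
    validated: bool
    owner: str
    approved: bool


def run_governed_decision(
    generate: Callable[[], str],
    validate: Callable[[str], bool],
    decide: Callable[[str], bool],
    owner: str,
    log: List[DecisionRecord],
) -> DecisionRecord:
    """Generate a candidate, validate it, let the owner decide, then log it."""
    candidate = generate()            # generation: produces a candidate only
    validated = validate(candidate)   # validation: a separate, independent step
    # The owner is only asked to decide on candidates that passed validation.
    approved = validated and decide(candidate)
    record = DecisionRecord(candidate, validated, owner, approved)
    log.append(record)                # monitoring: every outcome is recorded
    return record
```

Keeping generation and validation as separate callables mirrors the separation the model calls for, and the log gives monitoring something to review whether or not the candidate was approved.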

AI systems do not need to be reliable for decisions to remain reliable—but governance must be.

How to Manage AI Risk in Decision-Making

Managing AI risk in enterprise decision making requires discipline in how outputs are introduced, validated, and used. The starting point is defining where AI can be used. Not every decision is suitable for AI-generated input. High-impact decisions require tighter control.

- AI outputs should be treated as inputs, not conclusions. They inform analysis but do not replace verification.

- Validation must be built into the workflow. Outputs must be checked for accuracy, relevance, and context before use.

- Ownership must remain explicit. AI can influence decisions, but accountability remains human.

- Monitoring completes the loop. Outcomes must be reviewed to detect errors and drift.

AI generates outputs. People validate them. Leaders own decisions. Organizations monitor outcomes. Managing AI risk at scale depends on controlling how outputs move through decision processes.
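Monitoring for errors and drift can be as simple as tracking how often reviewed outcomes turn out to be wrong. The sketch below uses a rolling window of recent outcomes. The class name `OutcomeMonitor`, the window size, and the error-rate threshold are all assumptions chosen for illustration, and a real threshold would depend on the decision's impact.

```python
from collections import deque


class OutcomeMonitor:
    """Rolling error rate over the most recent reviewed outcomes (hypothetical)."""

    def __init__(self, window: int = 50, max_error_rate: float = 0.1) -> None:
        if window <= 0:
            raise ValueError("window must be positive")
        self.outcomes = deque(maxlen=window)  # oldest outcomes drop off automatically
        self.max_error_rate = max_error_rate

    def record(self, was_correct: bool) -> None:
        """Store whether a reviewed AI-influenced outcome was correct."""
        self.outcomes.append(was_correct)

    def error_rate(self) -> float:
        """Share of incorrect outcomes in the current window (0.0 if empty)."""
        if not self.outcomes:
            return 0.0
        return self.outcomes.count(False) / len(self.outcomes)

    def needs_review(self) -> bool:
        """True when the recent error rate exceeds the configured threshold."""
        return self.error_rate() > self.max_error_rate
```

Because the window only holds recent outcomes, a rising error rate shows up even when the long-run average still looks acceptable, which is the kind of gradual degradation described earlier.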

What This Means for CIOs

AI is becoming part of how decisions are made across the enterprise. The role of the CIO shifts from deploying systems to managing how those systems influence outcomes. AI must be treated as a source of decision risk, not just automation. Outputs accelerate work—but they introduce uncertainty that must be controlled.

The objective is not to make AI intelligent. It is to ensure that decisions influenced by AI remain reliable.

Governance must move closer to the point of decision. Controls must operate within workflows. Monitoring must focus on outcomes. Organizations that succeed will not eliminate AI risk in enterprise decision making. They will manage how it enters, spreads, and affects decisions. Others will continue to operate—but with decisions shaped by outputs they do not fully control.